Technical Value Assurance: The Missing Function in Enterprise Engineering

Why the gap between delivery and value is rarely a technical problem, and what it actually takes to close it.

The Signal That Never Gets Sent

In air traffic management, the safety of a flight is not guaranteed by the skill of a single pilot.

It is guaranteed by a system of independent oversight: Air Traffic Control confirming the route, the dispatcher validating fuel loads, the safety management system monitoring deviation in real time, and a structured incident review process that captures near misses before they become accidents. No single actor is trusted to self-certify their own correctness.

Enterprise engineering programmes rarely work this way.

A delivery team builds.

A product owner accepts.

Stakeholders hear status updates filtered through the lens of teams who are naturally incentivised to present progress, not problems.

There is no independent function whose purpose is to ask, consistently and structurally: is what we are building actually producing value, and are we carrying risks we have not yet named?

That function has a name. It is Technical Value Assurance. And its absence is one of the most reliable predictors of expensive programme outcomes.

What Technical Value Assurance Is Not

Before defining what it is, it is worth being precise about what it is not.

It is not a Programme Management Office generating status reports. It is not a Test Manager running regression cycles. It is not an architecture review board that meets quarterly to bless designs already in flight. It is not a quality gate that approves pull requests.

Each of those functions has its place. None of them answers the questions that Technical Value Assurance exists to answer:

Is the architecture we are building today going to hold the weight of what we need it to carry in twelve months? Are our delivery signals telling us the truth, or are they measuring the wrong things? Do we have risks in our system that no one has formally named? Is the value we are promising to the business traceable to the work we are actually doing?

These questions sit in a gap that most programme structures leave deliberately unoccupied, because answering them honestly is uncomfortable, and because the function requires investment that is hard to justify until its absence creates a crisis.

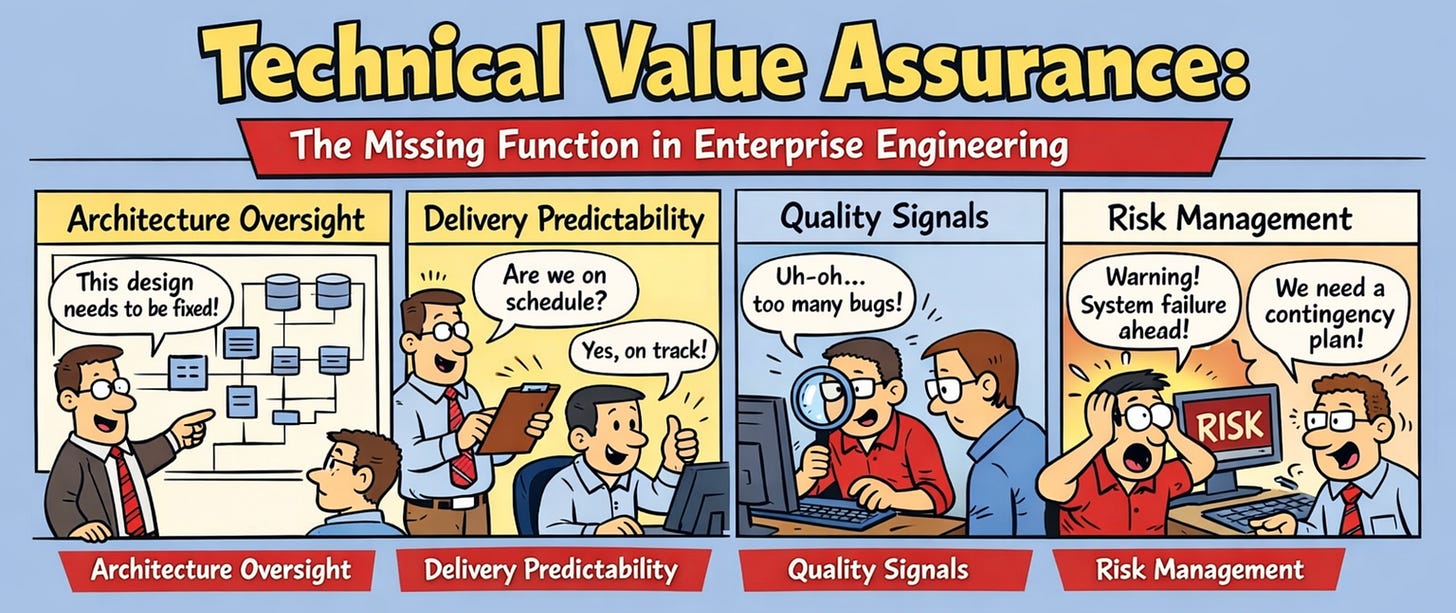

The Four Dimensions

Technical Value Assurance operates across four interrelated dimensions. In any programme where the surface area of impact spans multiple product teams and business domains, all four matter simultaneously.

Architecture Oversight

Architecture oversight is not architecture design. The design function belongs to the engineering team. Architecture oversight is the independent, ongoing assessment of whether the structural decisions being made remain coherent with the programme vision, with each other, and with the constraints that the real world is imposing as the programme evolves.

In practice, this means someone is continuously asking:

Have the decisions made in the last two sprints introduced structural debt?

Is the separation of concerns between platform layers holding in the way we intended, or are we accumulating shortcuts?

When a product team onboards and finds the self-service path insufficient, is that signal being fed back into the platform design, or is it being resolved with a one-off workaround that will proliferate?

A well-run platform should operate on the principle that if teams need to escape the designed golden path, that is a signal for platform evolution, not a support ticket to be closed. Architecture oversight is the function that hears that signal, validates it, and ensures it routes to the right decision-maker rather than disappearing into a backlog.

Without oversight, architecture drifts. Not dramatically. The first workaround is reasonable. The second is understandable. By the tenth, you no longer have an opinionated platform. You have a collection of exceptions that the team is quietly maintaining at the cost of every new onboarding.

The equivalent in complex operational systems is airspace design. The airways are not defined once and forgotten. They are continuously reviewed against actual traffic patterns, emerging constraints, new performance characteristics, and incident data. The structure must evolve, but it must evolve with intent. That intent cannot come from the actors who are already operating within the system.

Delivery Predictability

Delivery predictability is one of the most misunderstood concepts in enterprise engineering. Most programmes interpret it as: can we forecast when features will be done? That is useful but insufficient.

True delivery predictability asks a harder question: do we have structural confidence that the commitments we are making to the business are achievable, and do we have early enough visibility into when they are not?

The distinction matters because there are two fundamentally different types of delivery uncertainty. The first is execution uncertainty: the team knows what needs to be built, has the skills and capacity to build it, and the main variable is time. This is manageable with standard agile tooling. The second is structural uncertainty: the team is building something that has architectural unknowns, dependencies on external systems that are not yet confirmed, or foundational capabilities that need to exist before dependent features can be delivered. Standard velocity metrics are nearly useless for structural uncertainty because they measure progress on work that is already well-defined, and well-defined work is precisely the work that does not carry programme-level risk.

Technical Value Assurance maintains a structural dependency map. This means understanding, for instance, that self-service capabilities cannot be fully delivered until foundational pipeline stability is confirmed, that developer tooling ergonomics must reach a defined usability threshold before product team adoption is realistic, and that disaster recovery capabilities are a prerequisite for several teams to get business approval to go live. These dependencies are known. They are mapped. And they are actively tracked against the programme timeline, not discovered when a sprint fails to close.
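One way to make such a map concrete is as a small dependency graph that can be queried for unconfirmed preconditions. The following is a minimal sketch, not a prescribed tool: the `Capability` type, the `unmet_preconditions` function, the capability names, and the confirmed or unconfirmed statuses are all hypothetical, chosen only to mirror the three dependencies named above.

```python
from dataclasses import dataclass, field


@dataclass
class Capability:
    """A deliverable capability and the capabilities it structurally depends on."""
    name: str
    confirmed: bool = False  # has this capability been verified as in place?
    depends_on: list[str] = field(default_factory=list)


def unmet_preconditions(capabilities: dict[str, Capability], target: str) -> list[str]:
    """Return every unconfirmed capability that `target` depends on, directly or transitively."""
    unmet: list[str] = []
    seen: set[str] = set()
    stack = list(capabilities[target].depends_on)
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.add(name)
        capability = capabilities[name]
        if not capability.confirmed:
            unmet.append(name)
        stack.extend(capability.depends_on)
    return sorted(unmet)


# Hypothetical map: the edges follow the examples in the text,
# the statuses are invented for illustration.
capabilities = {
    "pipeline_stability": Capability("pipeline_stability", confirmed=True),
    "tooling_usability": Capability("tooling_usability", confirmed=False),
    "disaster_recovery": Capability("disaster_recovery", confirmed=False),
    "self_service": Capability("self_service", depends_on=["pipeline_stability"]),
    "product_team_adoption": Capability("product_team_adoption", depends_on=["tooling_usability"]),
    "go_live_approval": Capability("go_live_approval", depends_on=["disaster_recovery"]),
}

for target in ("self_service", "product_team_adoption", "go_live_approval"):
    print(target, "blocked by:", unmet_preconditions(capabilities, target))
```

The point of the sketch is the question it asks. A velocity chart reports how fast work is closing; a query like this reports whether a commitment is achievable at all given what has and has not been confirmed.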

This is the equivalent of pre-flight dispatch. Before any flight, the dispatch team checks not just whether the aircraft is serviceable, but whether every system required for the planned route and conditions is confirmed operational. Delivery predictability in engineering requires the same structured assessment of preconditions, not just progress.

Quality Signals

Quality in platform engineering is a deceptive concept. It is easy to measure the wrong things at high frequency and have complete confidence in a misleading picture.

Test coverage percentages, sprint velocity, deployment frequency, pull request merge rates: these are all useful signals within their scope. But none of them answers whether the platform is delivering the quality of experience that product teams actually need. None of them surfaces the schema that passed automated validation but carries a semantic error that will only manifest when a downstream consumer tries to build a real-time dashboard. None of them captures the fact that three separate product teams independently asked the same onboarding question in the same week, which is a signal that the documentation is missing something critical, not that the teams are confused.

Technical Value Assurance maintains a quality signal framework that spans three layers.

The first is the technical layer: what the automated tools tell you. Pipeline pass rates, error rates in shared registries, infrastructure drift detection, incident frequency and resolution time. These are necessary and should be automated into a platform health dashboard. Monitoring and logging platforms are not just operational tools. They are quality signal sources that feed into a systematic assessment of platform health.

The second is the experience layer: what product teams actually encounter. This requires deliberate collection of signals such as office hours attendance, support ticket classification, time to first successful deployment for new onboarding teams, frequency of CLI or SDK error paths, and the number of times a product team asks a question the documentation should have answered. These signals require someone whose job is to aggregate and interpret them, not just respond to the individual instance.

The third is the architectural quality layer: the structural integrity assessment that no automated tool provides. Are there naming inconsistencies that slipped through convention validation? Are there component boundaries where autonomy assumptions are being violated because an upstream event was missing a required field? These signals only surface if someone is looking for them with architectural context.

The three layers together form a quality picture that is honest. Any one layer in isolation tells a partial story. Programmes that rely only on the technical layer produce dashboards that are green while the platform quietly fails the people depending on it.
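The roll-up logic implied here can be sketched in a few lines. This is an illustrative model under stated assumptions rather than a reference implementation: the `Status` scale, the `Signal` type, the signal names, and the rule that a layer with no reported signals counts as amber rather than green are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Status(IntEnum):
    GREEN = 0
    AMBER = 1
    RED = 2


@dataclass(frozen=True)
class Signal:
    layer: str  # "technical", "experience", or "architectural"
    name: str
    status: Status


LAYERS = ("technical", "experience", "architectural")


def layer_status(signals: list[Signal], layer: str) -> Optional[Status]:
    """Worst status reported in a layer, or None if the layer has no signals at all."""
    statuses = [s.status for s in signals if s.layer == layer]
    return max(statuses) if statuses else None


def overall_status(signals: list[Signal]) -> Status:
    """Overall health is the worst layer. An unobserved layer counts as AMBER
    (an assumption of this sketch): no evidence is not the same as good health."""
    per_layer = [layer_status(signals, layer) for layer in LAYERS]
    return max(Status.AMBER if status is None else status for status in per_layer)


# Hypothetical snapshot: automated tooling is green, onboarding experience is not.
signals = [
    Signal("technical", "pipeline_pass_rate", Status.GREEN),
    Signal("technical", "infrastructure_drift", Status.GREEN),
    Signal("experience", "time_to_first_deployment", Status.RED),
]

print("technical:", layer_status(signals, "technical").name)
print("overall:", overall_status(signals).name)
```

Taking the worst layer rather than an average is deliberate: averaging is one of the ways a green technical dashboard can mask a failing experience layer.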

Systemic Risk Management

Every engineering programme carries risks. Most programmes manage risks as a list: a RAID log, reviewed weekly, with mitigations assigned and statuses tracked. This is not systemic risk management. It is risk inventory management.

The distinction is that a risk inventory captures risks that have already been identified and named. Systemic risk management asks where risks are accumulating that have not yet been named, and what the structural conditions are that will generate future risk if left unchanged.

In a platform serving business-critical operations at scale, the stakes are concrete. Platform reliability requirements often reflect the genuine operational consequences that a failure would have for the business that depends on the infrastructure. Systemic risk management in this context means maintaining a continuous view of the conditions that could compromise that reliability, beyond the incidents that have already occurred.

Systemic risks in platform engineering typically appear in four patterns.

The first is concentration risk: critical knowledge or capability concentrated in one or two individuals, meaning that a single departure creates an operational gap. Most platform programmes address this structurally through knowledge transfer phases, but the risk does not manage itself. Someone must track which capabilities remain individual-dependent and ensure that knowledge transfer is closing that gap at the right pace.

The second is dependency drift: the platform depends on external systems, internal tooling, and third-party services whose roadmaps and behaviours change independently. Schema registries, identity and access management integrations, cataloguing platforms, and CI/CD toolchains all carry their own change cadence. Systemic risk management maintains a dependency change calendar and ensures that no external change reaches the platform as a surprise.

The third is scale debt: the platform performs correctly at current load but carries design assumptions that will not hold at ten times the product team count or five times the event or data volume. The deployment models, the service boundaries, and the governance processes all need to be assessed not just for today’s operational reality but for the two- and three-year scale targets. Scale debt is invisible until it is catastrophic.

The fourth is governance erosion: the standards and conventions that make the platform coherent at scale are not automatically self-enforcing. Naming conventions, schema design standards, data retention policies, and structural taxonomy patterns all require active reinforcement. The risk is not that anyone deliberately violates them. The risk is that under delivery pressure, exceptions are made without formal review, and each exception normalises the next. Systemic risk management tracks governance exception rates and escalates when the trend line moves in the wrong direction.
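Tracking a trend line rather than a count can be illustrated with a simple least-squares slope over per-period exception rates. The sketch below makes several assumptions that are not drawn from any particular programme: the rate is defined as exceptions granted divided by changes made in the same period, the periods are evenly spaced, and the escalation threshold of one percentage point per period is an arbitrary placeholder.

```python
def trend_slope(values: list[float]) -> float:
    """Least-squares slope of values against their index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two periods to compute a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def should_escalate(exceptions: list[int], changes: list[int], threshold: float = 0.01) -> bool:
    """Escalate when the exception rate is rising faster than `threshold` per period.

    `threshold` is a hypothetical value; a real programme would calibrate its own.
    """
    if len(exceptions) != len(changes):
        raise ValueError("exceptions and changes must cover the same periods")
    if any(c <= 0 for c in changes):
        raise ValueError("each period needs at least one change to compute a rate")
    rates = [e / c for e, c in zip(exceptions, changes)]
    return trend_slope(rates) > threshold


# Hypothetical data: exceptions granted and total changes per sprint.
steady = should_escalate(exceptions=[2, 2, 2, 2], changes=[50, 50, 50, 50])
rising = should_escalate(exceptions=[1, 2, 4, 6], changes=[50, 50, 50, 50])
print("steady:", steady, "rising:", rising)
```

Using a rate rather than a raw count matters: a growing programme will naturally grant more exceptions in absolute terms, and only the rate shows whether the conventions are actually eroding.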

The Organisational Reality

The honest reason Technical Value Assurance is missing from most enterprise engineering programmes is not ignorance. It is incentive structure.

Delivery teams are measured on velocity and feature completion.

Programme managers are measured on milestone adherence and stakeholder satisfaction.

Architects are often focused on design rather than continuous oversight.

There is no natural home in the standard programme structure for a function whose primary product is honest assessment of risk and value delivery, particularly when that assessment may be uncomfortable to hear.

The function also requires a rare combination of capabilities. It requires deep enough technical understanding to evaluate architecture and quality signals with credibility. It requires enough programme management experience to translate technical observations into business risk language. And it requires the organisational standing and independence to surface findings without those findings being filtered by the delivery teams being assessed.

Certain roles carry elements of this function naturally: a retained Technical Programme Area Manager providing operational assurance, a Principal Engineer holding the architecture oversight thread in steady state. But the full function is most powerful when it is explicitly named, resourced as an integrated discipline, and given both the access and the mandate to operate across all four dimensions continuously, not just at milestone gates.

What It Produces

The deliverable of Technical Value Assurance is not a report. It is a state of informed confidence.

Programme leadership knows, on any given day, whether the architecture remains coherent. Product teams know that the platform they are building on has been independently assessed for quality, not just self-certified. The business knows that the commitments being made are structurally grounded, not optimistic. And the engineering team knows that risks are being surfaced and managed at programme level, which means they can focus on delivery rather than carrying the anxiety of unspoken systemic concerns.

For any programme whose operational mandate is high reliability at scale, that informed confidence is not a luxury. It is the structural equivalent of independent oversight in any safety-critical system. The components can be perfectly designed, the engineers highly skilled, the delivery process well-run. Without an independent function continuously monitoring the whole system and surfacing what individual actors cannot see from their own positions, the risk does not disappear. It simply goes unmanaged.

Technical Value Assurance is how engineering programmes stop confusing the absence of visible problems with the presence of genuine safety.